Both the Linux TA and the Windows TA are common TAs used for data transformations. This is important because there are TAs (technical add-ons) that live on Splunkbase that are used to extract fields and transform the data. What happens with this approach, is the fact that the formatting of the logs can often get butchered in the process. As mentioned earlier, there is no sense in configuring syslog forwarding on Linux/Windows servers where their logs are already being written to a file on disk. This is not a best practice and actually violates security policy in many organizations. This means that Splunk would have to be running as root to be able to accept the traffic. Essentially, any syslog data that was sent to Splunk during the restart is just lost data, and it cannot be recovered! The other major reason we do not recommend this approach is that many syslog devices cannot change from the default syslog port of 514. Since there is no acknowledgment, the origin source assumes that Splunk received the data. Any time you add an index, onboard data, install an application or otherwise update any configuration file, the Splunk service must be restarted. First, Splunk servers have to be restarted periodically.

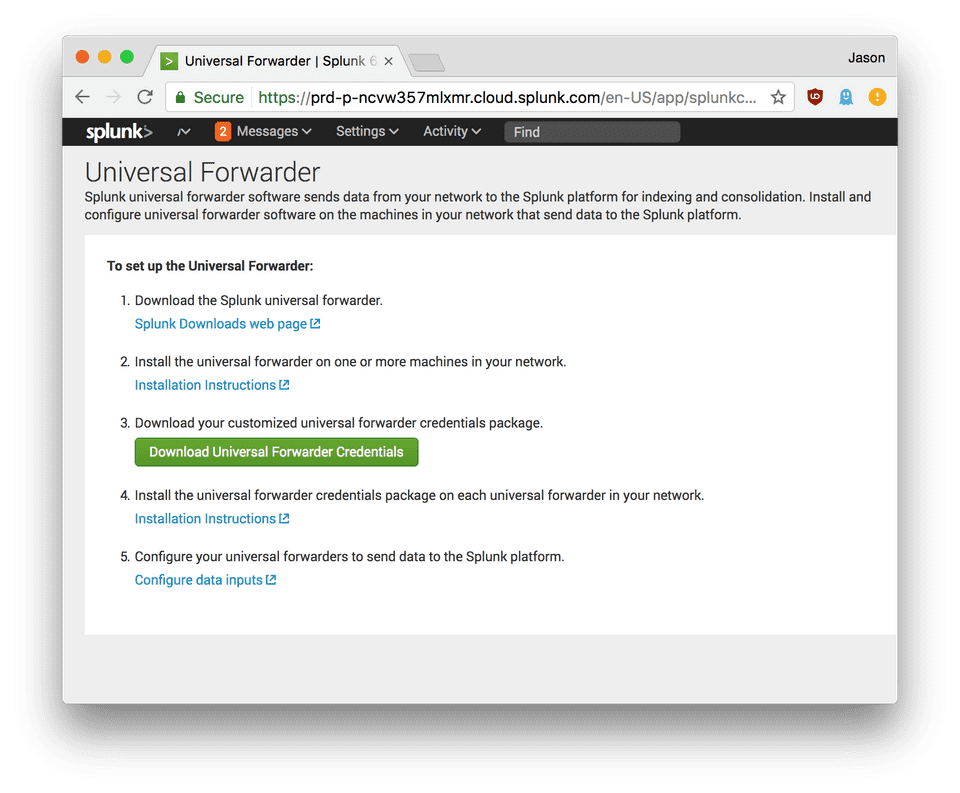

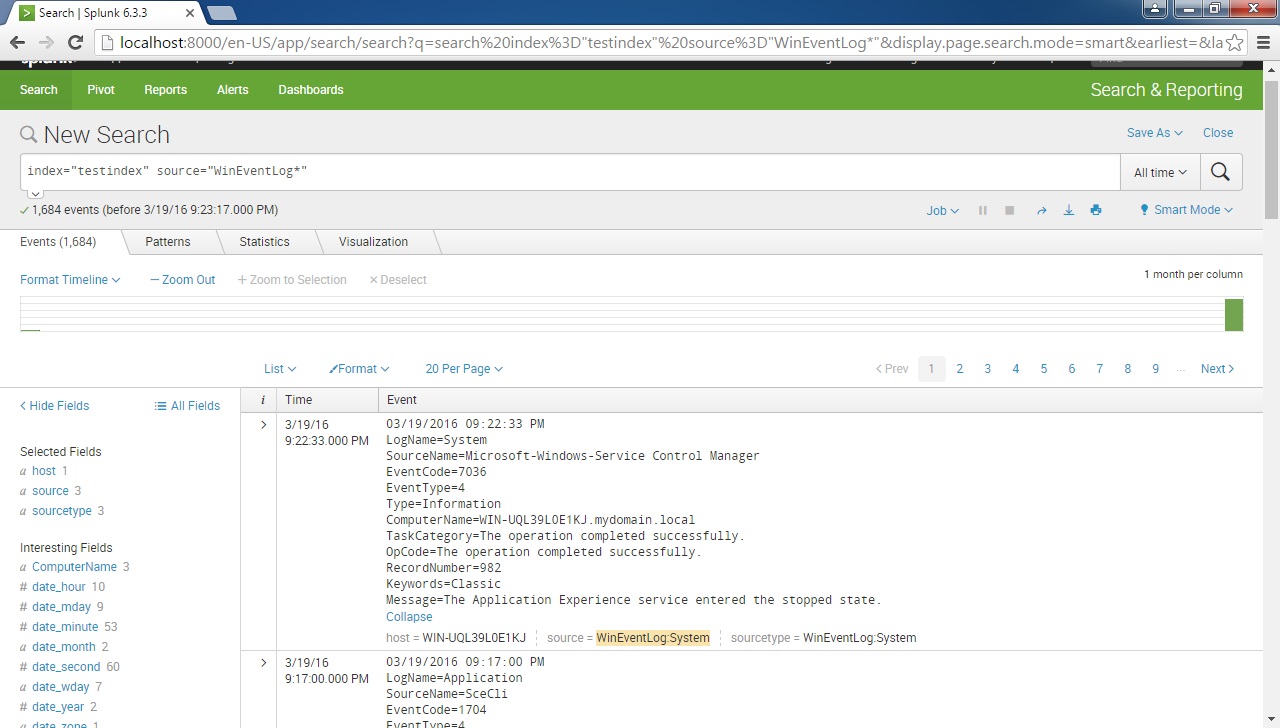

There are several reasons that this is not the recommended approach. A lot of times, a Splunk admin will open a network port specifically for syslog data on a Splunk indexer or forwarder. Sending syslog data directly to a network port opened on Splunk.,/br>With everything getting tagged with the same metadata, field extractions become difficult and sometimes impossible and you cannot make use of apps on Splunkbase for most of this data. Ultimately what ends up happening is everything coming over from the third-party application ends up getting tagged with the same Splunk metadata (host, index, sourcetype) and it becomes difficult and cumbersome to separate them. The issue with this method is the fact that it becomes very difficult to separate the original sources from each other. Network teams will configure their routers and switches to send SNMP traps to some third-party application like SolarWinds, then forward that data onto Splunk indexers from there. Sending your data to a third party via SNMP traps.What NOT to do When Splunking Your Syslog Data DO NOT send Windows/Linux syslog to a syslog server via some third-party software like NXLog. For the sake of this blog post, we are referring to data like network routers/switches/firewalls, or other applications where they natively syslog, but do not write a text log file that lives on a disk somewhere.įor Windows/Linux syslog where the log file lives on a disk, we will always recommend using a Splunk Universal Forwarder to collect that data. There are several types of devices that write to syslog. What Type of Syslog Data are We Talking About? You may be limited to a particular method due to company policy, and that’s ok. In the below post, I’ll cover some of the Do’s and Don’ts to Splunking your syslog, and for what it’s worth, every environment is different. As a Splunk Professional Services Consultant, I’ve seen many ways that customers send that data over to Splunk. 
And believe it or not, there are tons of ways you can Splunk that data. Syslog is plentiful in all environments, no matter what the size may be, and Splunking your syslog is something that inevitably happens, one way or another.
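The point made in this post that a syslog sender gets no acknowledgment can be illustrated with a small Python sketch. It assumes the device sends syslog over UDP, which is a common default but is not stated in the post, and it uses a local port with nothing listening to stand in for a Splunk input that is down for a restart. The function names, the sample message, and port 5514 are hypothetical and chosen only for illustration.

```python
import socket


def find_unused_udp_port() -> int:
    """Ask the OS for a free UDP port on localhost, then release it.

    Nothing is listening on the port once this function returns, which
    stands in for a Splunk network input that is mid-restart.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as probe:
        probe.bind(("127.0.0.1", 0))
        return probe.getsockname()[1]


def send_syslog_udp(message: str, host: str = "127.0.0.1", port: int = 5514) -> int:
    """Send one syslog-style message over UDP.

    Returns the number of bytes handed to the operating system. UDP has no
    delivery confirmation, so this value says nothing about whether any
    receiver actually got the message.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        return sock.sendto(message.encode("utf-8"), (host, port))


if __name__ == "__main__":
    # Hypothetical message: <134> is facility local0 (16 * 8) plus severity info (6).
    event = "<134>Oct 11 22:14:15 router1 demo: interface up"
    dead_port = find_unused_udp_port()
    sent = send_syslog_udp(event, port=dead_port)
    # The send "succeeds" even though no process is listening on dead_port.
    print(f"sender reports {sent} bytes sent to port {dead_port}")
```

From the sender's point of view the call completes normally, which is why a router or firewall keeps sending during a restart and the events from that window never reach an index.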
